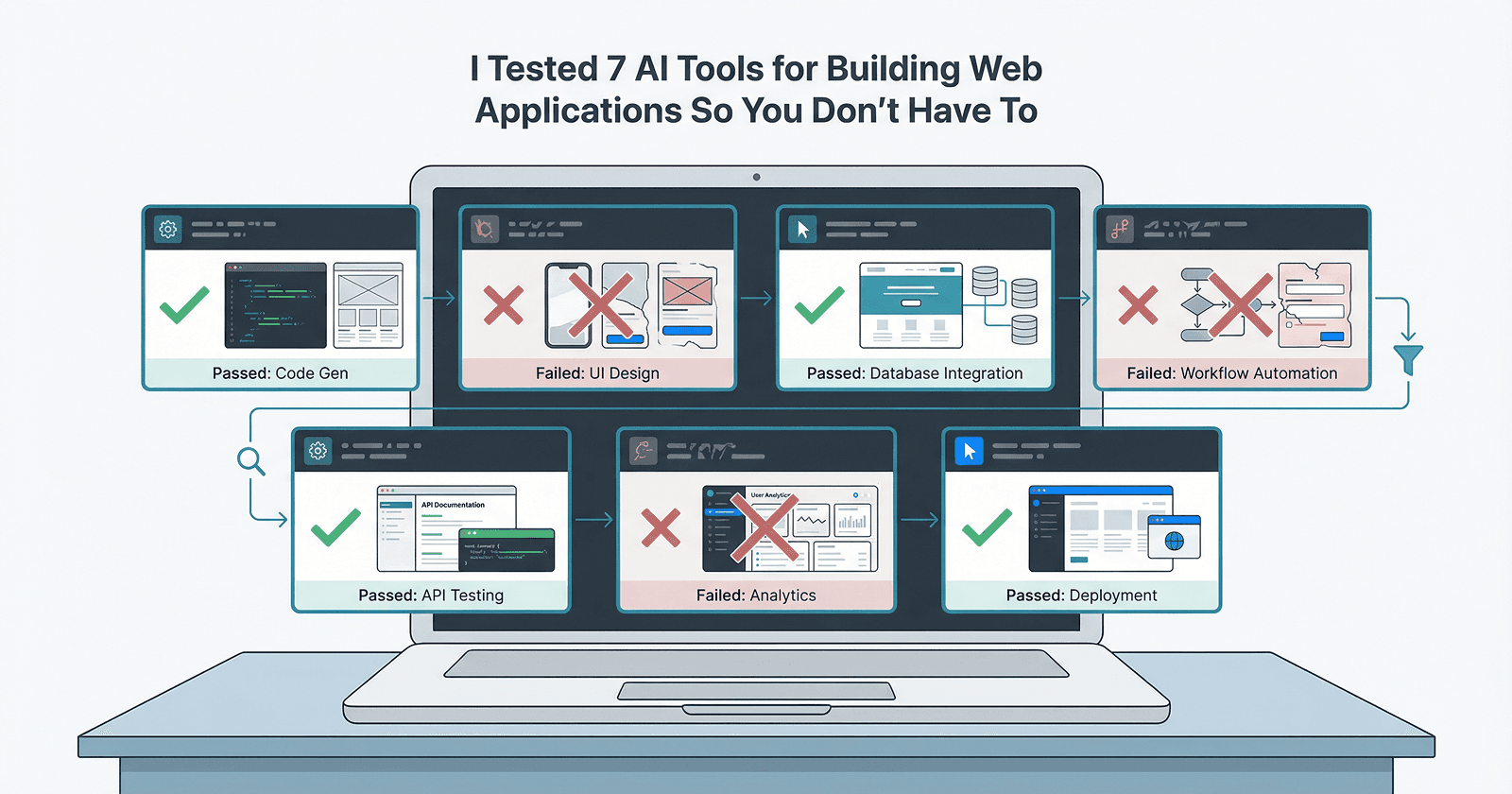

I Tested 7 AI Tools for Building Web Applications So You Don't Have To: What Actually Works and What's Overhyped

I’m Sasindu, a dev who experiments with AI tools. On ToolPlot, I share practical insights, honest reviews, and real-world tips so developers don’t waste time on overhyped tools.

The AI tool discovery process for web development is fundamentally broken.

You've seen the lists. "Top 10 AI Tools That Will Change How You Code Forever." "This AI Built an Entire SaaS in 5 Minutes." "Why Developers Are Terrified of This New Tool." They're all recycling the same promotional copy, written by people who clearly haven't spent more than twenty minutes with the product.

I've been building web applications for over a decade. I've shipped products that handle real traffic, real edge cases, and real money. When AI coding tools started proliferating, I was skeptical but curious. Could these tools actually speed up the unglamorous work of building production applications, or were they just impressive demos?

So I spent the last month actually using seven of the most talked-about AI tools for web development. Not watching YouTube tutorials. Not reading marketing sites. Actually building things: a task management application, a content dashboard, an API integration layer, and several smaller components.

This article documents what I found. Some tools impressed me. Most didn't. None of them work the way their marketing suggests.

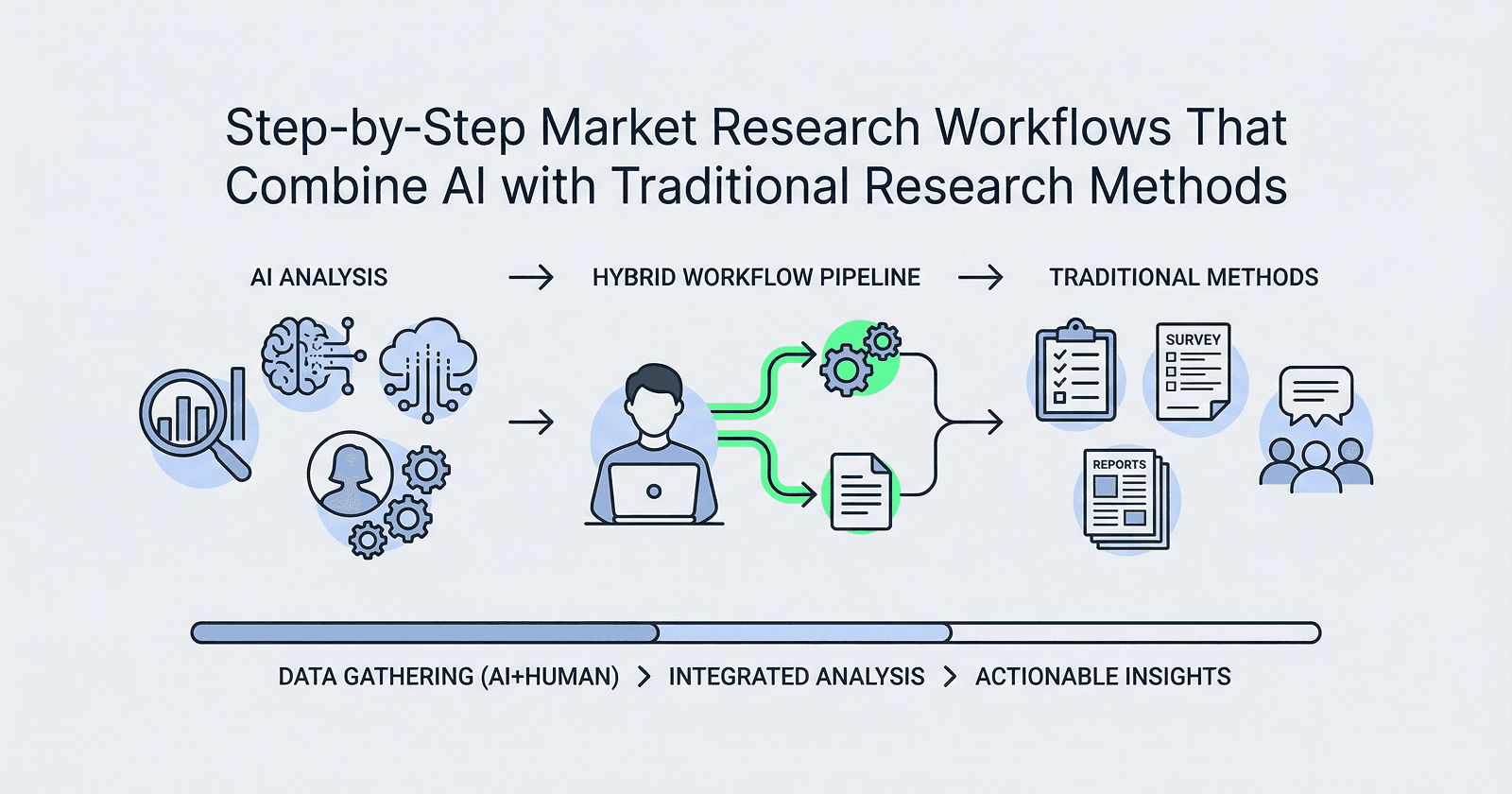

Testing Framework

I didn't evaluate these tools based on vibes or how impressive their demos looked. I built the same set of features with each one and tracked specific metrics.

What I built with each tool:

A task board with drag-and-drop functionality, user authentication, real-time updates, and data persistence. Not a to-do list. An actual multi-user application with the kinds of requirements you encounter in real projects.

Evaluation criteria:

Setup time: How long from account creation to writing actual code? This includes authentication, environment configuration, and understanding the tool's workflow.

Learning curve: How much mental overhead does this tool add? Does it introduce new abstractions I need to learn, or does it work with patterns I already know?

Code quality: Is the generated code something I'd accept in a code review? Does it follow current best practices, or does it generate patterns that were outdated three years ago?

Handling real requirements: Most tools can generate a login form. Can they handle "users should be able to reset passwords, and the reset link should expire after two hours, and we need to log all authentication attempts for security auditing"?

Iteration and debugging: When something breaks or needs to change, how painful is it? Can I understand what the tool generated well enough to fix it myself?

Who this is actually for: Every tool has an ideal user. I tried to identify who that person actually is, not who the marketing claims it is.

I gave each tool a fair shot. I read documentation. I watched official tutorials. I joined Discord servers and read what real users were saying. This wasn't a surface-level test.
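To make the "handling real requirements" criterion concrete, here is a minimal sketch of the password-reset example: a link that expires after two hours, with every attempt recorded for auditing. It is not code from any of the tested tools. All names (`ResetToken`, `AuthLogEntry`, `issueResetToken`, `redeemResetToken`) are hypothetical, the audit log is an in-memory array, and token generation is assumed to happen elsewhere.

```typescript
// All names here are hypothetical and exist only for this illustration.

const RESET_TTL_MS = 2 * 60 * 60 * 1000; // reset links expire after two hours

interface ResetToken {
  token: string;    // assumed to come from a secure random source elsewhere
  userId: string;
  issuedAt: number; // epoch milliseconds
  used: boolean;
}

type AuthEvent = "reset_requested" | "reset_succeeded" | "reset_rejected";

interface AuthLogEntry {
  userId: string;
  event: AuthEvent;
  reason: string | null;
  at: number;
}

// In-memory audit log; a real system would write to durable storage.
const authLog: AuthLogEntry[] = [];

function logAuth(userId: string, event: AuthEvent, at: number, reason: string | null = null): void {
  authLog.push({ userId, event, reason, at });
}

function issueResetToken(userId: string, token: string, now: number): ResetToken {
  logAuth(userId, "reset_requested", now);
  return { token, userId, issuedAt: now, used: false };
}

// Returns true only for a matching, unused, unexpired token.
// Every attempt, successful or not, is written to the audit log.
// Note: a production version would compare hashed tokens in constant time.
function redeemResetToken(stored: ResetToken, presented: string, now: number): boolean {
  if (stored.token !== presented) {
    logAuth(stored.userId, "reset_rejected", now, "token mismatch");
    return false;
  }
  if (stored.used) {
    logAuth(stored.userId, "reset_rejected", now, "already used");
    return false;
  }
  if (now - stored.issuedAt > RESET_TTL_MS) {
    logAuth(stored.userId, "reset_rejected", now, "expired");
    return false;
  }
  stored.used = true;
  logAuth(stored.userId, "reset_succeeded", now);
  return true;
}
```

Even this small version has three separate rejection paths plus logging on every branch. That is the kind of detail a generated login form tends to leave out.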

The 7 AI Tools Tested

1. Cursor

What it claims to do: An AI-first code editor that predicts what you want to write and helps you code faster through chat and inline suggestions.

What actually worked well:

The inline autocomplete is legitimately useful. It's not just predicting the next line; it understands context well enough to generate entire function implementations that are usually 70-80% correct. When refactoring, I could highlight a block of code, describe what I wanted to change, and it would handle the mechanical transformation accurately.

The chat interface with codebase context is where Cursor shines. I could ask "where is the authentication logic happening?" and it would point me to the right files with relevant code snippets. For navigating unfamiliar codebases, this is valuable.

What failed or felt overhyped:

It's still fundamentally a text editor with AI bolted on. The AI doesn't understand your application architecture or make architectural decisions. It autocompletes code, which is useful, but it's not "AI pair programming" in any meaningful sense.

The suggestions can be confidently wrong. It will generate plausible-looking code that contains subtle bugs or uses deprecated APIs. You need to know enough to catch these errors, which means you're still doing full code review on everything it generates.

The pricing is aggressive for what you get. You're essentially paying for a VS Code fork plus API calls to models like Claude and GPT-4.

Who should use it:

Experienced developers who write a lot of boilerplate and want to move faster on mechanical tasks. People comfortable reviewing and correcting AI-generated code.

Who should avoid it:

Beginners who need to develop judgment about what good code looks like. Teams with tight budgets who can't justify the monthly cost per developer.

Verdict: Worth using, but understand that you're paying for a faster autocomplete and better code search, not an AI teammate.

2. v0 by Vercel

What it claims to do: Generate production-ready React components from text descriptions using AI, optimized for Next.js and Vercel's ecosystem.

What actually worked well:

For UI components, v0 is impressively fast. I described a pricing table with three tiers, feature comparisons, and a toggle between monthly and annual pricing. It generated clean React code with Tailwind styling that looked modern and worked immediately.

The iteration workflow is smooth. You can refine outputs through conversation, and it maintains context about what you're building. The generated code uses current React patterns (hooks, proper state management) and the Tailwind is actually readable.

What failed or felt overhyped:

It's a component generator, not an application builder. It has no concept of routing, state management across pages, API integration, or database design. You get isolated components that you then need to integrate into an actual application yourself.

The components are often over-engineered for simple use cases and under-engineered for complex ones. A basic button component might include accessibility features you don't need, while a complex form won't include proper validation or error handling.

It's locked into the Vercel ecosystem. Everything assumes Next.js, Tailwind, and Vercel deployment. If that's not your stack, most of the value disappears.

Who should use it:

Next.js developers who need to quickly prototype UI components and are comfortable integrating them into a larger application. Teams already on Vercel's platform.

Who should avoid it:

Anyone not using Next.js. Backend developers. People who need full application generation, not just components.

Verdict: Situational. If you're already building with Next.js and Tailwind, it's a useful accelerator for UI work. Otherwise, skip it.

3. Bolt.new

What it claims to do: Build and deploy full-stack web applications through conversation, with an in-browser development environment and instant previews.

What actually worked well:

The demo experience is genuinely impressive. You can go from idea to working prototype faster than any other tool I tested. The in-browser environment means zero setup time. You describe an app, and within minutes you're looking at something that actually runs.

For throwaway prototypes and quick experiments, Bolt.new delivers. I built a simple expense tracker in about fifteen minutes. It had a clean UI, local storage persistence, and worked well enough to show someone as a proof of concept.

What failed or felt overhyped:

The moment you need to do anything real, the limitations become painful. You can't easily export your code to a proper development environment. The in-browser IDE is cramped and missing basic features. Debugging is primitive.

It makes architectural decisions for you, and they're usually wrong for anything beyond a prototype. Everything lives in a few files. There's no proper separation of concerns. The database layer is whatever quick solution the AI chose, not what you'd actually use in production.

Iteration is frustrating. If you want to change something fundamental about the architecture, you're often better off starting over than trying to redirect the AI.

Who should use it:

Non-developers who need to validate an idea quickly. Developers who want to sketch out a UI concept before building it properly. People comfortable treating the output as a disposable prototype.

Who should avoid it:

Anyone who wants to build something they'll actually maintain. Teams that need version control, proper testing, or deployment to their own infrastructure.

Verdict: Overhyped for serious development work, but genuinely useful for rapid prototyping if you understand you're building a throwaway.

4. GitHub Copilot

What it claims to do: An AI pair programmer that suggests code as you type, trained on billions of lines of public code.

What actually worked well:

Copilot is the most mature tool in this category, and it shows. The suggestions are more contextually aware than they were two years ago. It's particularly good at generating test cases, writing documentation, and handling repetitive patterns.

The workspace context feature (when it works) can suggest relevant code from across your entire project, not just the current file. This makes it better at maintaining consistency with your existing patterns.

It stays out of your way. Unlike some other tools, it doesn't interrupt your flow with chat interfaces or require you to context-switch. It makes suggestions; you accept or ignore them.

What failed or felt overhyped:

It's still fundamentally autocomplete. The "pair programmer" framing is marketing. It doesn't understand your requirements, review your architecture, or catch logic errors. It completes the code you started writing, sometimes accurately.

The suggestions often feel like they're from someone who skimmed the project yesterday. They'll use the right general pattern but wrong specific details. Variable names that almost match your conventions. Imports that are close but incorrect.

You develop a muscle memory of Tab-Tab-Edit, accepting suggestions and immediately fixing the small errors. Whether this is faster than just writing it yourself is debatable.

Who should use it:

Developers who write a lot of boilerplate or work in verbose languages. Teams that are already on GitHub and want to try AI assistance without changing their workflow.

Who should avoid it:

Beginners who need to develop good coding instincts. People who find autocomplete suggestions distracting rather than helpful.

Verdict: Worth using if you're already comfortable with your development workflow and want marginal speed improvements. Not transformative.

5. Replit Agent

What it claims to do: An AI agent that can build full applications autonomously, handling everything from setup to deployment in Replit's cloud environment.

What actually worked well:

For educational projects and simple scripts, Replit Agent is surprisingly capable. I asked it to build a URL shortener, and it scaffolded a working Node.js app with Express, set up a simple database, and deployed it. The whole process took about ten minutes.

The agent can handle multi-step tasks. It installs dependencies, creates files, writes code across multiple files, and can iterate based on error messages. It's the closest thing to "write my app for me" that actually sort of works.

The Replit environment is genuinely good for learning and experimentation. Instant preview, collaborative editing, and simple deployment make it easy to share what you're building.

What failed or felt overhyped:

The agent makes rookie mistakes constantly. It forgets to handle edge cases, uses deprecated packages, and creates security vulnerabilities. I found hardcoded credentials, missing input validation, and SQL injection vulnerabilities in code it generated.
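For readers who haven't run into SQL injection, here is a minimal sketch of that class of bug and its usual fix. It is an illustration, not the code the agent generated. `QueryConfig`, `findUserUnsafe`, and `findUserSafe` are hypothetical names, and the `$1` placeholder assumes a PostgreSQL-style driver; other databases use different placeholder syntax.

```typescript
// QueryConfig and both builder functions are hypothetical names for this sketch.
// The { text, values } shape resembles what many SQL drivers accept.
interface QueryConfig {
  text: string;
  values: string[];
}

// Vulnerable: user input is spliced directly into the SQL text.
function findUserUnsafe(email: string): QueryConfig {
  return { text: `SELECT id FROM users WHERE email = '${email}'`, values: [] };
}

// Safer: the SQL text is constant and the input travels separately as a parameter,
// so the driver never interprets it as SQL.
function findUserSafe(email: string): QueryConfig {
  return { text: "SELECT id FROM users WHERE email = $1", values: [email] };
}

const malicious = "x' OR '1'='1";

const unsafe = findUserUnsafe(malicious);
// unsafe.text is now:
//   SELECT id FROM users WHERE email = 'x' OR '1'='1'
// The WHERE clause is always true, so the query matches every row.

const safe = findUserSafe(malicious);
// safe.text is unchanged; the attacker's string only appears in safe.values.
```

The unsafe version looks perfectly reasonable at a glance, which is why generated database code needs a careful review before it goes anywhere near production.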

It can't handle complex requirements. Any task that requires understanding tradeoffs or making architectural decisions either produces garbage or gets stuck in loops trying different approaches.

The "autonomous agent" framing is misleading. You need to supervise it constantly, catch its errors, and redirect it when it goes off track. It's less like having a junior developer and more like having an intern who needs constant guidance.

Who should use it:

Students learning web development. Hobbyists building simple projects. Teachers who want to quickly demonstrate concepts.

Who should avoid it:

Anyone building production applications. Developers who care about code quality or security. Teams that need code they can maintain long-term.

Verdict: Overhyped as a development tool, but has legitimate educational value. Treat it like a fast tutorial generator, not a development assistant.

6. Claude with Artifacts

What it claims to do: Generate complete, working code artifacts that can be previewed and iterated on through conversation.

What actually worked well:

The artifacts feature creates genuinely useful, self-contained components. I built an interactive data visualization, a Markdown editor with live preview, and a basic spreadsheet interface. All worked immediately and the code quality was high.

Claude understands context better than most AI tools. I could reference previous artifacts, ask it to modify specific parts, and it would maintain consistency. The conversational interface feels natural for iterating on ideas.

For components that need to be self-contained (widgets, tools, interactive demos), this is one of the better workflows I've found. The code it generates is readable, follows modern best practices, and usually works without modification.

What failed or felt overhyped:

It's limited to single-file artifacts. You can't build multi-file applications. There's no state persistence beyond localStorage. You can't install custom packages beyond what's available in the sandbox.

The lack of version control or proper project structure means this is strictly for throwaway code or isolated components. Anything you build here needs to be manually copied into a real project.

It can't handle backend logic, databases, or API integrations in any real way. The artifacts are frontend-only, which limits what you can actually build.

Who should use it:

Developers who need to quickly prototype UI components or interactive demos. Technical writers creating code examples. Anyone building tools or visualizations that need to be self-contained.

Who should avoid it:

Teams building production applications. Anyone who needs backend logic, proper project structure, or version control.

Verdict: Situational. Excellent for its specific use case (self-contained interactive components), but don't expect it to build complete applications.

7. ChatGPT with Code Interpreter

What it claims to do: Write and execute code in a sandboxed environment, useful for data analysis, scripting, and quick prototypes.

What actually worked well:

For data manipulation and one-off scripts, Code Interpreter is genuinely useful. I uploaded CSV files, asked it to clean and analyze the data, and got working visualizations. For tasks that are more analysis than application, this is valuable.

The ability to execute code and see actual results is powerful. It can iterate based on errors, which means it can debug itself to some degree. This is more reliable than tools that just generate code without running it.

What failed or felt overhyped:

It's not a web development tool. You can't build applications with it. There's no persistent environment, no way to deploy what you create, and the sandbox is extremely limited.

The code it writes is often inefficient or uses outdated approaches because it's optimizing for "working" rather than "good." For learning or quick tasks, that's fine. For anything you plan to maintain, you'll need to rewrite it.

The session resets completely. You can't build on previous work across conversations, which makes it useless for iterative development.

Who should use it:

Data analysts and scientists. People who need quick scripts for one-off tasks. Non-developers who need to automate something simple.

Who should avoid it:

Web developers looking to build applications. Anyone who needs persistent environments or proper development workflows.

Verdict: Overhyped as a development tool, but useful for its actual purpose (data analysis and scripting).

Patterns and Hard Truths

After testing all seven tools extensively, some uncomfortable patterns emerged.

Most AI tools are optimized for demos, not development.

Every tool I tested performs best in the first five minutes. They can generate impressive prototypes quickly, which makes for great marketing videos. But the moment you need to iterate, handle edge cases, or integrate with real systems, the experience degrades rapidly.

This isn't an accident. These tools are built to impress people evaluating them, not to support people using them long-term. The demo is the product.

The "autonomous agent" framing is mostly marketing.

Several tools claim to be autonomous agents that can build applications for you. In practice, they're chatbots that generate code. The difference matters.

An autonomous agent would understand your requirements, make architectural decisions, handle errors gracefully, and produce production-ready code. What we actually have are sophisticated autocomplete systems that need constant human supervision.

The tools that are most honest about being assistants rather than agents are generally more useful.

AI is better at copying than creating.

All these tools are trained on existing code. They're very good at generating code that looks like something that already exists. They're much worse at solving novel problems or making architectural decisions that require understanding tradeoffs.

This means they excel at boilerplate, common patterns, and well-trodden paths. They struggle with anything that requires genuine creativity or domain-specific knowledge.

The integration tax is real.

Most tools introduce new abstractions, new workflows, or new environments. Learning these takes time. Integrating the generated code into your existing project takes time. Debugging when things go wrong takes time.

For simple projects, this overhead can exceed the time saved. You need to be working on projects of sufficient complexity for AI tools to provide net value.

Human developers are still irreplaceable for:

Understanding the actual problem you're trying to solve. Making architectural decisions that consider long-term maintainability. Debugging complex issues that require understanding system behavior. Reviewing code for security, performance, and correctness. Making tradeoffs between competing requirements.

The tools that acknowledge this and position themselves as assistants are more useful than the ones claiming to replace developers.

Code quality varies wildly, and you need expertise to judge it.

AI-generated code often looks correct but contains subtle bugs, security vulnerabilities, or performance issues. Catching these requires the same expertise you'd need to write the code yourself.

This creates a paradox: the tools are most useful for experienced developers who need them least, and least useful for beginners who need them most.

Final Verdict

Here's what actually works in 2026 for building web applications with AI:

Use AI tools as accelerators for tasks you already know how to do. They're useful for writing boilerplate, generating component variations, or handling mechanical transformations. They're not replacements for understanding web development.

Cursor or Copilot for day-to-day coding. If you're an experienced developer who writes a lot of code, these tools provide marginal but real speed improvements. The value compounds over time.

v0 or Claude Artifacts for UI prototyping. If you're in the Vercel ecosystem or need self-contained components, these tools can save significant time. Just understand their limitations.

Bolt.new or Replit Agent for throwaway prototypes only. They're useful for quickly validating ideas or creating demos, but don't try to build production applications with them.

Ignore the hype about autonomous agents. We're not there yet. What exists today are sophisticated assistants that need constant supervision.

My realistic workflow in 2026:

I use Cursor for my primary development work because the autocomplete and codebase navigation are genuinely helpful. When prototyping UI, I sometimes use v0 to generate initial components that I then refine. For everything else, I write code the traditional way because it's still faster and produces better results.

I spend less time typing boilerplate and more time on architecture, debugging, and understanding requirements. The AI tools haven't changed what skills matter; they've just shifted where I spend my time.

What I'm testing next:

I'm interested in tools that focus on specific, well-defined problems rather than trying to replace the entire development process. AI-assisted testing tools, intelligent debugging assistants, and architectural analysis tools seem more promising than general-purpose code generators.

I'm also watching tools that help with the parts of development that aren't coding: requirement analysis, API design, and documentation. These are areas where AI could provide real value without the code quality concerns.

The bottom line:

AI tools for web development are useful but overhyped. They work best as assistants for experienced developers, not as replacements for learning to code. The tools that acknowledge their limitations and focus on specific use cases are more valuable than the ones promising to revolutionize everything.

If you're looking for a tool that will build your application for you, you'll be disappointed. If you're looking for tools that can speed up specific parts of your workflow, there are real options worth considering.

Set your expectations accordingly.